Monocular Visual Odometry in ROS2

The Problem

Most robots that need to know where they are rely on a depth camera, a LiDAR, or GPS. What if you only have a single, plain camera?

Monocular visual odometry is the problem of estimating a camera’s motion through 3D space using only a stream of 2D images. It’s a hard problem for a fundamental reason: a single image contains no depth information. The same scene can be explained by a small object close to the camera or a large object far away — you can’t tell from one frame alone.

The goal of this project was to build a working VO system from scratch in ROS2, understanding each piece of the pipeline rather than treating it as a black box.

Architecture

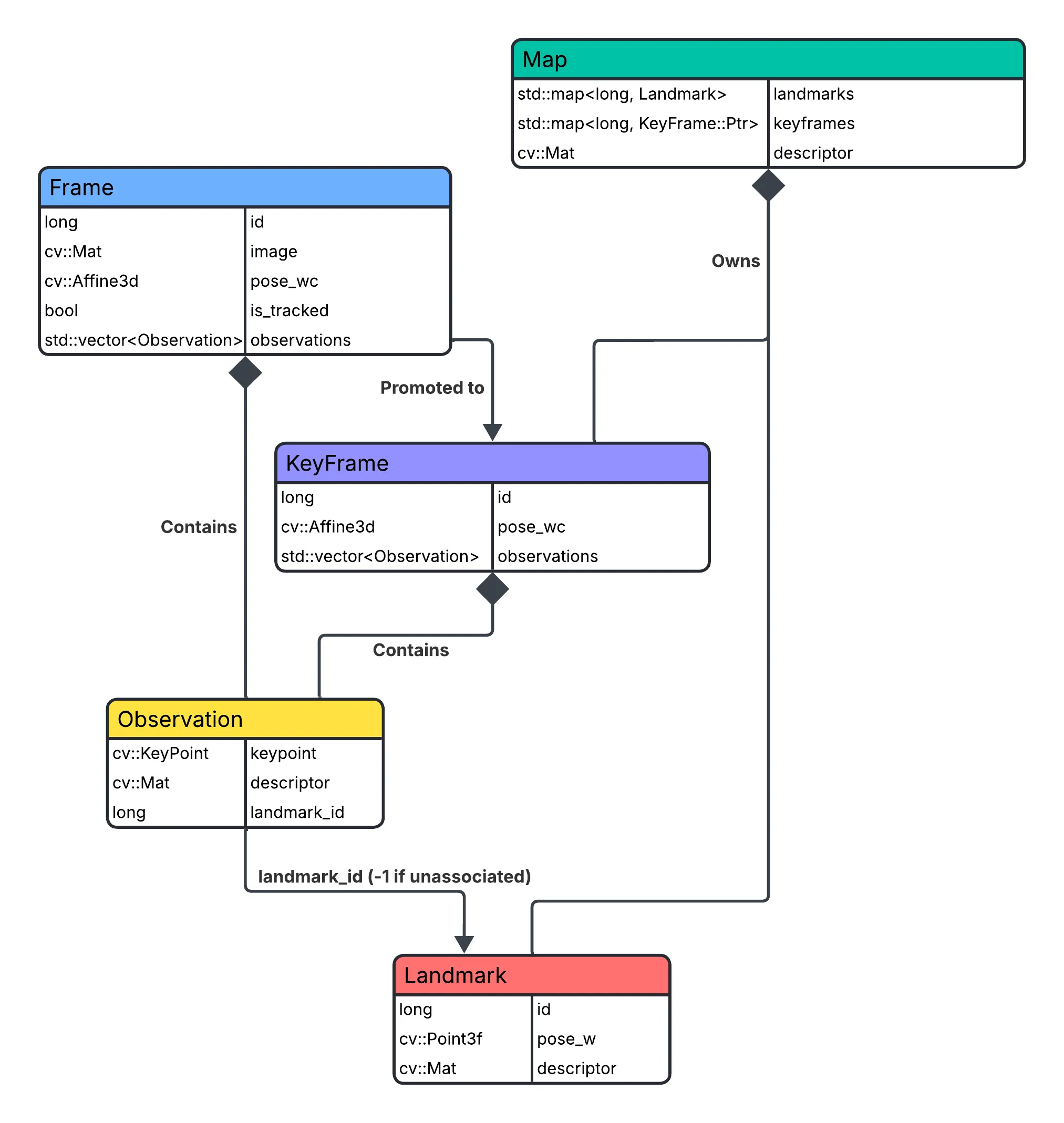

The system follows the structure pioneered by ORB-SLAM, built around four core data structures.

Observation

The fundamental unit connecting the 2D and 3D worlds. Every detected feature is stored as an Observation: a keypoint (pixel position), a descriptor (visual fingerprint), and a landmark_id. A landmark_id of -1 means the feature hasn’t been associated with a 3D map point yet — it’s a candidate waiting to be triangulated. This single field encodes whether a detection is “known to the map” or not, which drives most of the pipeline logic downstream.

Because different stages need different subsets, queries against a frame’s observations use an ObservationFilter: ALL, WITH_LANDMARKS (for PnP pose estimation), or WITHOUT_LANDMARKS (for triangulation candidates). Rather than scattering filter logic across the codebase, this makes the intent explicit at every call site.

Frame and KeyFrame

A Frame is a transient object — it holds the raw image, the camera pose (T_wc, camera-to-world transform), and a list of Observations. It exists only long enough to run tracking and decide whether it becomes a keyframe. An is_tracked flag marks when the frame has passed the tracking stage, after which the keypoint fields are considered invalid to prevent misuse.

A KeyFrame is what a Frame becomes when it’s promoted to a permanent map anchor. The key difference: KeyFrame does not store the raw image. Images are large; a map accumulating thousands of keyframes can’t afford to keep them all. What it does keep is an unordered_map<long, size_t> from landmark_id to observation index, enabling O(1) lookups when the tracker needs to find which pixel a given 3D point was last seen at.

Landmark

A Landmark is a triangulated 3D point in world coordinates, plus the descriptor that was used to detect it. Storing the descriptor alongside the 3D position is forward-looking — it’s what would enable future feature matching for loop closure or relocalization, even though those aren’t implemented yet.

Map

The Map owns all KeyFrames and Landmarks, keyed by their globally unique IDs. Its most important method is get_observation_to_landmark_point_correspondences: given a set of observations, it resolves each landmark_id to its 3D world position and returns the paired 2D-3D point lists. This is the direct input to PnP — the bridge that lets the tracker turn “pixels I’m seeing now” into “here’s where the camera must be.”

Two-Stage Pipeline

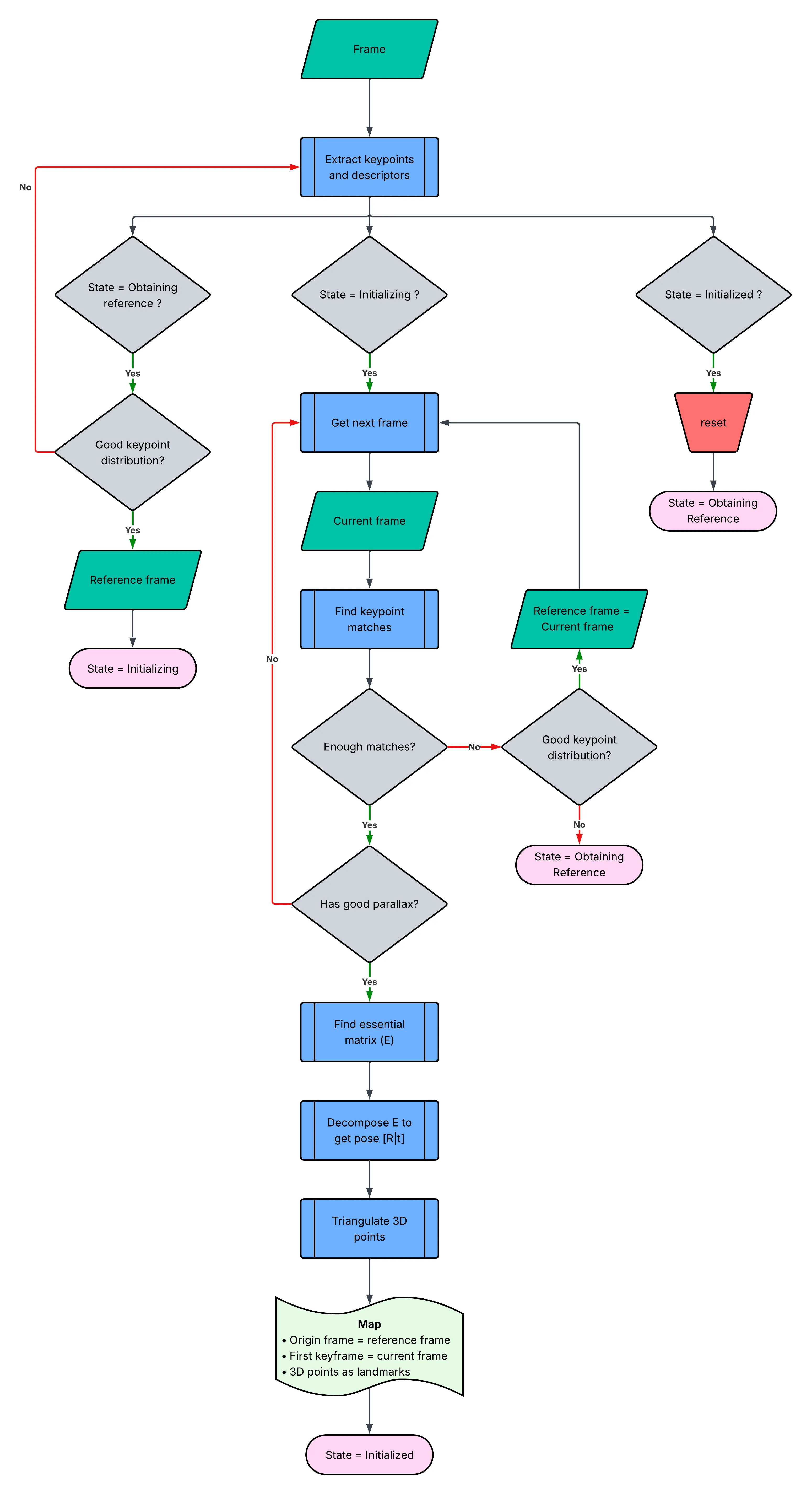

Initialization

The system can’t estimate motion until it has a 3D map to work with. Initialization solves this chicken-and-egg problem from just two views.

The initializer waits for a frame pair with sufficient baseline — the camera must have moved enough for the two views to have real parallax. Once a suitable pair is found, it computes the Essential Matrix from the matched feature correspondences, decomposes it to recover the relative rotation and translation, and triangulates the matched points into the first set of 3D landmarks. These two frames become the first Keyframes, and the map is bootstrapped.

A key subtlety here is the H/F model selection: the system fits both a Homography (for planar scenes) and a Fundamental Matrix (for general scenes) and uses a score ratio to decide which model better explains the data. This matters because a purely planar scene makes the Essential Matrix degenerate.

Tracking

![]()

Once initialized, every incoming frame goes through the same loop:

- Lucas-Kanade optical flow tracks the 2D positions of existing landmarks from the last frame into the current frame — fast, sub-pixel accurate, and requires no descriptor matching. Each tracked feature is stored as a

MatchDatarecord preserving both the original observation index in each frame and the 2D pixel coordinates. RANSAC returns an inlier mask, and those original indices are what map the surviving inliers back to specificObservations to update their landmark associations. - PnP + RANSAC solves for the current camera pose given the 2D-3D correspondences assembled by

Map::get_observation_to_landmark_point_correspondences. RANSAC is essential here: optical flow is noisy and some tracks will be wrong, and a single outlier can corrupt a pose estimate without it. - A keyframe decision is evaluated: has enough time passed, has the camera moved far enough, or has tracking quality dropped enough to warrant a new anchor? If yes, new 3D points are triangulated between the current frame and the last Keyframe, and the frame is promoted to the map.

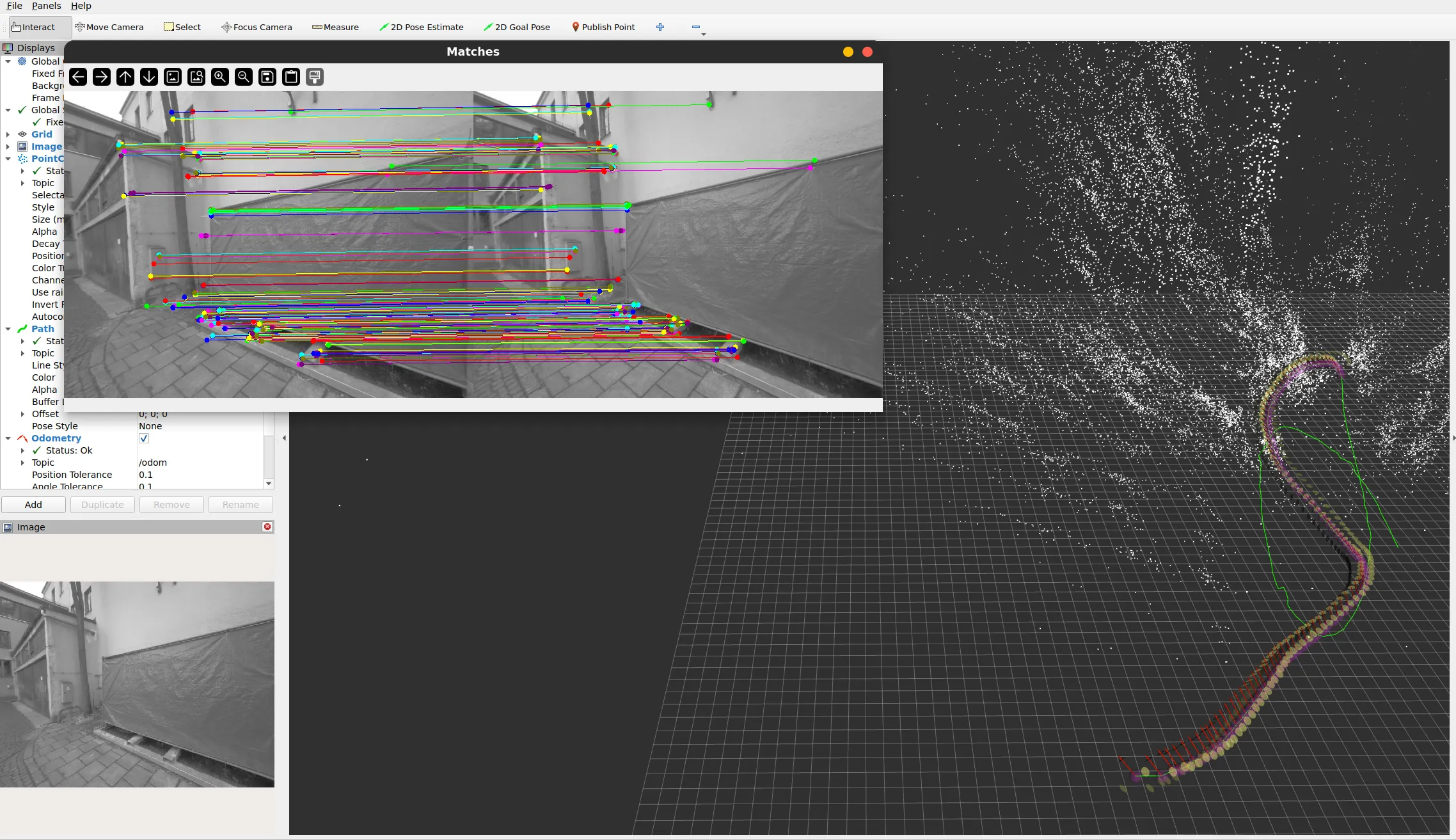

The result is an estimated 6-DOF trajectory and a sparse 3D point cloud, published as standard ROS2 messages and visualized live in RViz2.

What I Learned

Building this from scratch rather than using an existing library forced engagement with decisions that are usually hidden:

- Why optical flow breaks down at large displacements and why keyframe spacing matters for keeping it reliable.

- How sensitive monocular VO is to the quality of initialization — a bad initial triangulation poisons the entire subsequent trajectory.

- The trade-off between keyframe frequency and map size: more keyframes means better coverage but higher triangulation cost and memory use.

- Why drift is unavoidable without a backend: PnP errors accumulate frame by frame with no mechanism to correct them globally.

What’s Next

This is a pure VO front-end — it has no memory of where it’s been. Two additions would turn it into a full SLAM system:

- Bundle Adjustment — a backend optimization that jointly refines all camera poses and landmark positions to minimize reprojection error globally, correcting accumulated drift.

- Loop Closure — recognizing a previously visited location and using that constraint to correct the trajectory, preventing long-term drift.

Stack: C++17 · ROS2 Jazzy · OpenCV · RViz2